A flag in the wind

Measuring material properties and wind force from real-world video

1 April 2020

The paper ‘Cloth in the Wind: A Case Study of Physical Measurement through Simulation’ by Tom Runia and his colleagues Kirill Gavrilyuk, Cees Snoek and Arnold Smeulders will be presented at the annual Conference on Computer Vision and Pattern Recognition (CVPR).

Physical scene understanding

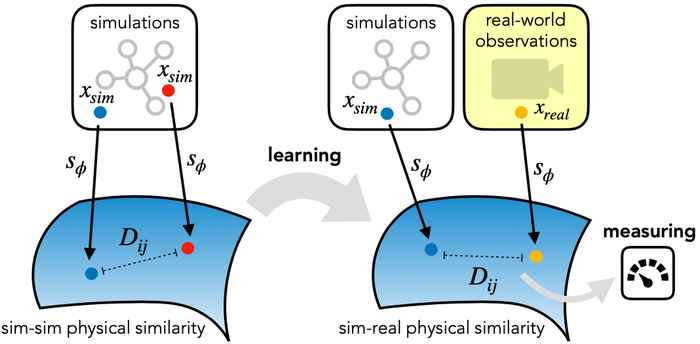

Inspired by the way humans estimate physical properties of the world around them, the proposed method relies on computer simulations to predict physical phenomena. There is substantial evidence that humans run mental models to make such predictions: we predict the trajectory of objects in mid-air, estimate a liquid's viscosity and gauge the velocity at which an object slides down a ramp. By analogy, simulation models usually optimise their parameters by performing trial runs and selecting the best. In recent years, simulated and rendered physical models of the world have become so visually appealing that the authors have reconsidered them for physical scene understanding. This alleviates the need to obtain large numbers of real observations and to annotate their characteristics meticulously.

Simulations and real-world observations

For many of the physical phenomena around us, we have developed sophisticated models that explain their behaviour. Nevertheless, measuring physical properties from visual observations is challenging because of the large number of underlying physical parameters, including material properties and external forces. In the article, Tom Runia and his colleagues from the Informatics Institute propose to measure these underlying physical properties for cloth in the wind without ever having seen a real example before. The proposed solution is an iterative refinement procedure with simulation at its core. An algorithm gradually updates the parameters of the physical model by running a simulation of the observed phenomenon and comparing the current simulation to a real-world observation. The correspondence between the two is measured using an embedding function that maps physically similar examples to nearby points. The researchers consider a case study of cloth in the wind, with curling flags as their leading example: a seemingly simple phenomenon that is physically highly involved. Based on the physics of cloth and its visual manifestation, they propose an instantiation of the embedding function. For this mapping, modelled as a deep network, the authors propose a new neural network building block that decomposes a video volume into its temporal spectral power and corresponding frequencies. This enables the extraction of effective visual features from a video that are characteristic of the cloth's intrinsic properties and the external forces.
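The refinement loop described above can be illustrated with a small toy example. This is only a sketch under loud assumptions, not the authors' implementation: `simulate` is a hypothetical stand-in for a cloth simulator and renderer, with an invented linear relation between wind speed and flutter frequency; `embed` is a hand-written summary of the temporal power spectrum rather than the learned deep network from the paper; and `refine` uses a simple shrinking grid search in place of whatever update rule the authors use. The names, frame rate, clip length and wind range are all chosen for illustration.

```python
import numpy as np

FPS = 32.0       # assumed frame rate of the toy videos
N_FRAMES = 128   # assumed clip length in frames


def simulate(wind_speed, seed):
    """Toy stand-in for a cloth simulator plus renderer (not real physics).

    Returns a (frames, height, width) volume showing a travelling wave whose
    flutter frequency is assumed to grow linearly with wind speed.
    """
    rng = np.random.default_rng(seed)
    t = np.arange(N_FRAMES)[:, None, None] / FPS
    x = np.linspace(0.0, 1.0, 16)[None, None, :]
    rows = np.zeros((1, 8, 1))  # broadcast the wave over 8 image rows
    freq_hz = 0.4 * wind_speed  # hypothetical wind-to-frequency relation
    wave = np.sin(2.0 * np.pi * (freq_hz * t - 2.0 * x)) + rows
    return wave + 0.05 * rng.standard_normal(wave.shape)


def embed(video):
    """Hand-written embedding based on temporal spectral power.

    Takes an FFT along the time axis of every pixel, averages the power over
    pixels, and returns [dominant frequency, power-weighted mean frequency].
    """
    spectrum = np.fft.rfft(video - video.mean(axis=0), axis=0)
    power = (np.abs(spectrum) ** 2).mean(axis=(1, 2))
    freqs = np.fft.rfftfreq(video.shape[0], d=1.0 / FPS)
    centroid = (power * freqs).sum() / power.sum()
    return np.array([freqs[power.argmax()], centroid])


def refine(observed, lo=0.0, hi=20.0, rounds=6, trials=9):
    """Simulate candidates, keep the one closest to the observation, then narrow the search."""
    target = embed(observed)
    centre, radius = 0.5 * (lo + hi), 0.5 * (hi - lo)
    for step in range(rounds):
        candidates = np.clip(
            np.linspace(centre - radius, centre + radius, trials), lo, hi
        )
        distances = [
            np.linalg.norm(embed(simulate(w, seed=step)) - target)
            for w in candidates
        ]
        centre = float(candidates[int(np.argmin(distances))])
        radius *= 0.5  # shrink the search window around the best candidate
    return centre


# Pretend this clip is a real recording with an unknown wind speed.
observed = simulate(wind_speed=8.0, seed=123)
estimate = refine(observed)
print(f"estimated wind speed: {estimate:.2f} m/s")
```

In this sketch the comparison never happens in pixel space. The simulated and observed clips use different noise seeds, so they differ frame by frame, yet their embeddings lie close together whenever the underlying parameter is similar. That is the role the article assigns to the embedding function.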

Flags at Science Park Amsterdam

To assess the performance of the proposed model, the authors perform experiments on estimating the intrinsic material properties of various types of cloth. Moreover, to evaluate the estimation of external forces, they have collected a video dataset of flags in the wind on the Science Park campus. Specifically, by hoisting an anemometer on one of the flagpoles, the authors measured the wind speed while simultaneously recording the flag's visual appearance with a video camera. The experiments demonstrate that the proposed method compares favourably to prior work on the task of measuring cloth material properties and external wind force from real-world video.